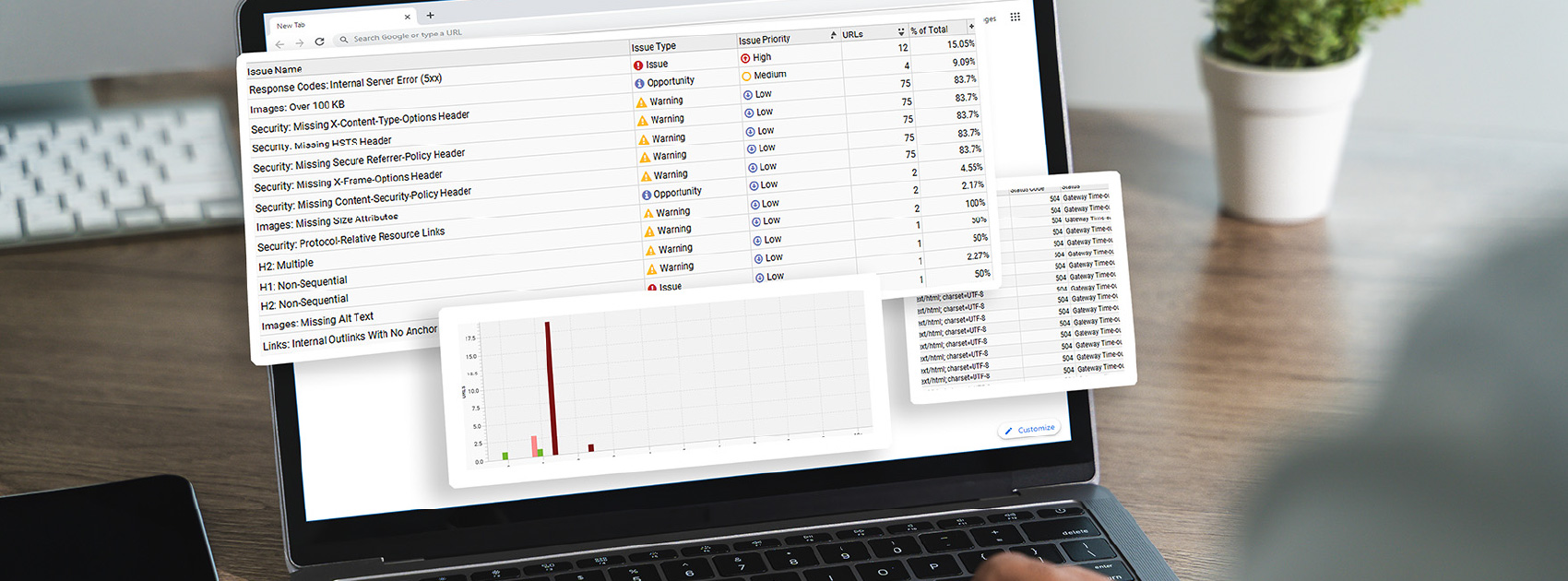

Is Crawl Waste Killing Your Website Performance?

If your website feels sluggish or your products aren’t appearing in search results, the issue might not be your content. It could be how search engines are crawling your site.

Search Engine Land recently shared insights from Gary Illyes of Google on the Search Off the Record podcast about the biggest website-crawling issues in 2025. Spoiler alert: two common (but avoidable) mistakes account for 75% of the problems search engines run into when crawling sites. This highlights a growing problem many businesses overlook: crawl waste. And it’s more common than you might think.

Many search visibility problems trace back to how search engines crawl your site, and a few common but avoidable issues cause the majority of crawl inefficiencies.

What Is Crawl Waste?

Crawl waste happens when search engine bots spend time crawling pages that don’t add value, rather than focusing on the pages you actually want indexed. When this happens, your site’s visibility, speed, and overall SEO performance can take a hit.

Crawl waste occurs when search engines spend time on low-value or duplicate pages instead of indexing your most important content.

The Biggest Culprits Behind Crawl Issues

According to Illyes, the majority of crawl inefficiencies come down to four main technical issues:

1. Faceted Navigation (50%)

Filtering options like size, color, and price can create endless URL combinations. While helpful for users, they can trap search bots in a loop of duplicate or low-value pages.

2. Action Parameters (25%)

URLs that change due to actions like “Add to Wishlist” or “Sort By” often don’t change the actual content. This confuses search engines and wastes crawl resources.

3. Tracking Parameters (10%)

UTMs, session IDs, and other tracking codes can generate multiple versions of the same page without adding any unique value.

4. Plugins & Widgets (5%)

Some third-party tools unintentionally create crawlable URLs that don’t serve a meaningful purpose.

Most crawl issues stem from faceted navigation, unnecessary URL parameters, tracking codes, and plugins generating redundant or low-value pages.
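
To make these categories concrete, here is a minimal Python sketch that sorts URLs into the parameter-based buckets above by looking at their query strings. The parameter names (`size`, `sort`, `sessionid`, and so on) are hypothetical placeholders, not names from Google's report, so swap in whatever your own site uses.

```python
from urllib.parse import urlsplit, parse_qsl

# Hypothetical parameter names; replace them with the ones your site uses.
CATEGORIES = {
    "facet": {"size", "color", "price"},
    "action": {"add_to_wishlist", "sort"},
    "tracking": {"utm_source", "utm_medium", "utm_campaign", "sessionid"},
}

def classify_url(url: str) -> list[str]:
    """Return the crawl-waste categories whose parameters appear in the URL."""
    query = urlsplit(url).query
    names = {name.lower() for name, _ in parse_qsl(query, keep_blank_values=True)}
    # A category matches when the URL uses at least one of its parameters.
    return [label for label, params in CATEGORIES.items() if names & params]

print(classify_url("https://example.com/shoes?color=red&utm_source=newsletter"))
# ['facet', 'tracking']
print(classify_url("https://example.com/shoes"))
# []
```

Running a list of crawled URLs through a function like this gives a rough picture of which culprit is generating the most extra URLs on your site.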

Why It Matters

Once a search bot gets stuck in a crawl loop, the damage is already done. Your site ends up paying the price in several ways:

- Increased server load

- Slower indexing of new or updated content

- Wasted crawl budget on irrelevant pages

This means that your most important pages may not be getting the attention they deserve.

Crawl inefficiencies waste resources, slow down indexing, and prevent your key pages from ranking effectively.
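
One rough way to put a number on wasted crawl budget is to look at the URLs a bot actually requested (for example, pulled from your server logs) and measure how many carried a query string. The sketch below assumes that every parameterized URL is potential waste, which is a crude proxy rather than a rule, and the sample paths are made up for illustration.

```python
from urllib.parse import urlsplit

def crawl_waste_share(crawled_urls: list[str]) -> float:
    """Return the fraction of crawled URLs that include a query string."""
    if not crawled_urls:
        return 0.0
    with_params = sum(1 for url in crawled_urls if urlsplit(url).query)
    return with_params / len(crawled_urls)

# Hypothetical sample of paths requested by a search bot.
sample = [
    "/shoes",
    "/shoes?color=red",
    "/shoes?color=red&size=9",
    "/about",
]

print(f"{crawl_waste_share(sample):.0%} of sampled bot requests hit parameterized URLs")
# 50% of sampled bot requests hit parameterized URLs
```

A high share does not prove a problem on its own, but it is a useful signal for deciding where to look first.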

Technical Debt Is Real

Just like financial debt, technical debt compounds over time. Ignoring how search engines interact with your site can quietly damage your SEO performance and user experience. Managing crawl efficiency is a strategic, intentional task to add to your website maintenance list.

Ignoring crawl optimization over time creates compounding technical debt that harms both SEO performance and user experience.

How to Stay Ahead

To keep search engines focused on what matters:

- Audit your URL structure regularly

- Limit unnecessary parameters and dynamic URLs

- Use canonical tags and robots directives wisely

- Monitor crawl activity through tools like Google Search Console

- Evaluate plugins and third-party tools for unintended SEO impact

Regular audits, controlling URL parameters, and using SEO tools help ensure search engines focus on your most valuable pages.
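
As an illustration of controlling URL parameters, the following Python sketch reduces a URL to a single preferred form by dropping tracking parameters and sorting the rest. It assumes tracking parameters start with `utm_` or are named `sessionid`; both are illustrative choices, and your own list will likely differ.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed tracking parameters; adjust to match your analytics setup.
TRACKING_PREFIXES = ("utm_",)
TRACKING_NAMES = {"sessionid"}

def canonical_url(url: str) -> str:
    """Drop tracking parameters and sort the remaining ones."""
    parts = urlsplit(url)
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if not name.lower().startswith(TRACKING_PREFIXES)
        and name.lower() not in TRACKING_NAMES
    ]
    kept.sort()  # a stable order means one URL per parameter combination
    # The fragment is dropped because it is not sent to the server.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

print(canonical_url("https://example.com/shoes?utm_source=news&size=9&color=red"))
# https://example.com/shoes?color=red&size=9
```

The result is the kind of value you would place in a page's `<link rel="canonical">` tag so that every tracked variant points search engines to one preferred version.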

Benefits of Partnering with a Trusted Marketing Agency for Your Website

If your site isn’t performing the way it should, it’s important to look deeper than your content. Optimizing how search engines crawl your website ensures your best content gets seen, indexed, and ranked faster.

By partnering with a professional marketing team for SEO services and website development and maintenance, you can ensure that your website is working for your business, not against it.

Working with an experienced marketing team helps optimize crawl efficiency so your website performs better and ranks faster.

FAQs

A crawl budget is the number of pages a search engine bot will crawl on your site within a given timeframe. If that budget is wasted on low-value or duplicate pages, your important pages may not get indexed quickly, or at all.

Signs of crawl waste include slow indexing, duplicate pages in search results, and unusual URL patterns in tools like Google Search Console. Server logs and crawl reports can also reveal inefficiencies.

Filters and faceted navigation are not inherently bad for SEO. They improve user experience, but if left unmanaged, they can create thousands of unnecessary URLs. Proper handling (like noindex tags or parameter controls) is key.

Tracking parameters can hurt SEO if they are not handled properly. While useful for analytics, they can create duplicate versions of the same page. Using canonical tags helps signal the preferred version to search engines.

To fix crawl waste, start with a technical SEO audit. Identify duplicate URLs, unnecessary parameters, and crawl traps, then implement fixes like canonicalization, robots.txt rules, and parameter handling.
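
Before deploying robots.txt rules, it can help to check which URLs they would actually block. The sketch below uses Python's standard-library `urllib.robotparser` against a made-up rule set. The `/wishlist/` and `/cart/` paths are hypothetical, and the rules use plain path prefixes because this parser may not interpret wildcard patterns the way Google's crawler does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules; the paths stand in for action URLs on your own site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wishlist/
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def is_crawlable(url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the rules above allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)

print(is_crawlable("https://example.com/wishlist/add/123"))  # False
print(is_crawlable("https://example.com/shoes"))             # True
```

A quick check like this catches rules that accidentally block pages you want indexed, which is an easy mistake to make when tightening crawl rules.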